27 March 2024

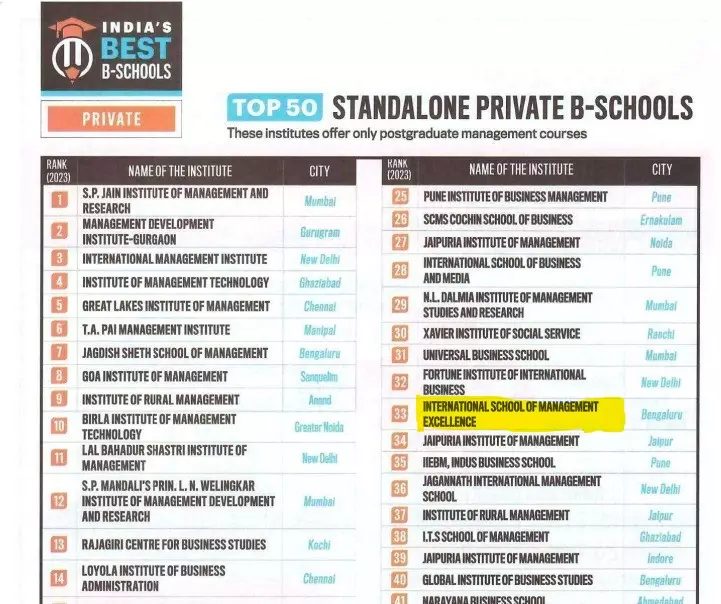

In a recent lecture on data-driven decision-making at our institute, the International School of Management Excellence (ISME), a question arose about the ethical use of data in the era of generative AI. This was not unexpected, as I have encountered similar questions before. I believe it is a pertinent issue, considering that generative AI deals with trillions of data points. But before delving into that, it is crucial to understand the adoption of GenAI and how this technology is transforming the world.

GenAI has been around for some years but has gained prominence only in the last couple of years. In that short time, it has done wonders for the industry and has benefited its stakeholders and users. According to publicly available sources, the current market size of generative AI is around US$45 billion and is poised to grow to US$180–200 billion in the next six to seven years.

Here are a few use cases from several countries where GenAI has proven beneficial:

- China: Alibaba employs GenAI to enhance personalized shopping experiences for its users. For instance, Alibaba’s Taobao app uses GenAI to suggest products to customers based on their past purchases and browsing activity.

- India: Haryana uses Jugalbandi, a chatbot integrated with WhatsApp and powered by GenAI. This versatile chatbot handles tasks ranging from pension requests to college scholarship assistance. Jugalbandi understands 10 of India’s 22 official languages and provides information on 171 government initiatives.

- USA: Macy’s, a retail giant, implemented a generative AI system for product design. The system is meant to produce variations of current designs, or even entirely new ideas, based on customer preferences and current trends. This helps Macy’s make products that match the tastes of different customer groups and regions, which could increase sales and reduce design costs.

- France: Dassault Systèmes, a major player in 3D design software and services, has been leveraging generative AI to optimize designs for complex systems in industries such as aerospace and shipbuilding. This allows the company to find superior designs much faster than traditional methods, reducing development time and costs.

Although GenAI applications demonstrate significant advancements across fields and regions, they are not immune to challenges, as is the case with any technology. Given the unprecedented scale of the datasets involved, the technology’s proliferation and the possibility of unethical practices pose risks for organizations, governments, and society.

Specifically, the challenges associated with GenAI range from concerns about data quality and authenticity to the misuse of data, which is currently a pressing issue worldwide. Among these, the most critical, in my opinion, is the misuse of data, because its potential impact is immense and difficult to comprehend. Below are a few real-life cases of data misuse and the chilling impact they had:

Case 1:

In this case, the scheme involved deceiving company personnel with fabricated videos of high-ranking executives, including the Chief Financial Officer (CFO), on a video call. The perpetrators first sent a fraudulent email posing as the CFO and requesting a confidential financial transaction. They then arranged a video call with a targeted employee and displayed bogus videos of the CFO and other familiar faces to lend credence to the request. Falling for the trick, the employee authorized money transfers amounting to a staggering $25 million.

This incident underscores the peril of deepfake technology in financial fraud, prompting concerns about the security of a company’s internal systems and the vigilance of its staff against potential threats.

Case 2:

A fake video of Infosys founder N. R. Narayana Murthy circulated on Facebook. It was made by altering footage from a past event (the BT Mindrush event) where Murthy spoke about the Indian economy and Indian entrepreneurs. In the morphed video, he appeared to discuss quantum computing software developed by his team, claiming it could help stock market investors earn INR 2.50 lakh on the very first day. Facebook has since flagged the video as false. More alarming is that the fake video tried to avoid detection by cropping closely around Murthy’s face to remove watermarks and other identifying marks from the original video. Thankfully, it was detected very early, and a public warning was issued.

Case 3:

Indian film actress Rashmika Mandanna was the target of a deepfake video that went viral late last year, in October 2023. The deepfake showed her entering an elevator, whereas the original video featured a different woman. The culprit behind the video was a techie who was arrested by Delhi Police in January 2024. He reportedly made the video to try to increase followers on a social media fan page he ran for the actress.

There have been several such incidents around the world in which organizations, individuals, and governments have fallen prey to deepfakes. Thankfully, no national security breach or major cross-border incident caused by misuse of the technology has been reported so far. But think of a situation where, in a doctored video, a head of state directs the country’s army generals to carry out an attack on another country. The thought itself is scary enough, isn’t it?

Now the question is, can such things be controlled? The answer is definitely “yes.” Governments and businesses are actively working to combat this menace.

- Government: Governments are actively playing a dual role concerning generative artificial intelligence (GenAI), serving as both facilitators and guardians. In their facilitative capacity, governments prioritize GenAI’s advancement through investments in research, education, and infrastructure, alongside funding initiatives to enhance GenAI skills and fostering collaboration between academia and industry. Moreover, they encourage the seamless integration of AI technologies across various sectors.

Simultaneously, governments act as protectors by implementing robust regulations to ensure responsible and ethical GenAI use. These regulations address concerns about privacy, security, and potential misuse. By setting clear standards and enforcing guidelines, governments safeguard individuals, businesses, and society from the adverse impacts of GenAI. Furthermore, to maintain a zero-tolerance policy towards data misuse, regulators impose hefty penalties, exemplified by the fine of roughly $900 million (EUR 746 million) levied on Amazon in 2021 under the European Union’s General Data Protection Regulation (GDPR) for violating rules on processing personal data.

- Enterprise: Businesses are navigating the ethical landscape of GenAI through strategies such as scrutinizing data for diversity, implementing transparency measures, incorporating human oversight, and collaborating to establish ethical guidelines. Moreover, they are creating new positions such as data protection officer, chief ethics officer, and AI ethics manager. By taking these steps, businesses can strive to ensure GenAI is used ethically and responsibly, minimize potential harm, and maximize its positive impact.

I am optimistic that governments, businesses, and other stakeholders in GenAI technology will continue to work together to reduce the unethical use of data and technology. This collaborative effort is crucial, as the technology is poised for fast growth in the future. More importantly, as we witness a decline in exploitation, I believe businesses and government entities will wholeheartedly embrace the technology and employ it in a more impactful and effective manner.

Questions to discuss:

- How can individuals play a role in promoting responsible development of Generative AI (GenAI) and reducing the dangers linked to data misuse?

- How can governments and businesses collaborate more effectively to address challenges related to the unethical use of data?

- The blog ends on a positive note regarding the future of GenAI. Do you agree with this positive outlook? Why or why not?

- Imagine you are a leader in the field of GenAI. What specific actions would you implement to establish public confidence and guarantee the ethical advancement of this technology?